TikTok is ‘aggressively’ removing videos that promote Osama Bin Laden’s ‘Letter to America,’ citing its aim of maintaining a safe and responsible content environment. This article looks at the platform’s response, why combating the spread of extremist content matters, and the challenges social media platforms face in moderating vast amounts of user-generated content.

According to recent reports, TikTok has moved quickly to take down videos that promote the letter. The action is in line with the platform’s stated commitment to a secure and positive user experience, particularly where extremist content or material that may pose a risk to public safety is concerned.

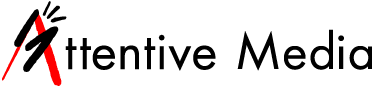

Osama Bin Laden’s ‘Letter to America,’ written in 2002, is considered a foundational text for extremist ideologies and has been used by various groups to spread radical propaganda. Its promotion on social media raises concerns about its effect on vulnerable audiences and the risk of fostering extremist sentiment. TikTok’s effort to curb the spread of such content is aimed at limiting the influence of extremist ideologies within its user community.

Social media platforms, including TikTok, continually struggle to moderate vast amounts of user-generated content. Online content changes constantly and spreads quickly, which makes it hard for platforms to stay ahead of potentially harmful material. The ‘Letter to America’ episode illustrates how difficult it is to catch extremist content before it reaches a wide audience.

The removal of videos promoting Bin Laden’s ‘Letter to America’ raises important questions about how social media platforms navigate the delicate balance between allowing freedom of expression and ensuring user safety. While platforms aim to provide spaces for diverse perspectives, they must also contend with the responsibility to curb the spread of content that can incite violence or contribute to the radicalization of individuals.

TikTok’s swift action against videos promoting extremist content demonstrates its commitment to creating a safe and inclusive online environment. The platform employs a combination of automated systems and human moderators to identify and remove content that violates community guidelines. This multi-faceted approach reflects the ongoing efforts of social media platforms to stay ahead of emerging threats and safeguard their user communities.

In addition to the platform’s internal content moderation mechanisms, TikTok relies on community reporting to identify and address potential violations. Empowering users to report problematic content plays a crucial role in holding individuals accountable for sharing materials that may pose risks to public safety. The collaboration between the platform and its user base is integral to the ongoing efforts to maintain a responsible and secure online space.

TikTok’s swift removal of videos promoting Osama Bin Laden’s ‘Letter to America’ underscores the platform’s commitment to user safety and responsible content moderation. As social media platforms moderate ever larger amounts of user-generated content, the challenge of balancing freedom of expression against the need to prevent the spread of extremist ideologies persists. Content moderation strategies will need to keep evolving to ensure a positive and secure online environment for users worldwide.